Confluence AI Is Here. It Summarizes Pages. We Needed More.

When Atlassian shipped AI features in Confluence, Diana was optimistic. She manages documentation for a product team of 35 people across four Confluence spaces. The spaces contain roughly 600 pages of engineering docs, product specs, runbooks, and onboarding materials. She spends about six hours a week maintaining those pages: updating outdated content, answering questions from new hires who can't find things, and manually checking whether pages still reflect current processes. If Confluence AI could cut that time in half, she'd get three hours back every week.

She turned on Atlassian Intelligence the day it became available. Six months later, here's what it actually does, what it doesn't, and where external agents fill the gaps.

What Atlassian Intelligence Actually Does

Here's the thing about Atlassian Intelligence: a few of the features are legitimately good. Not game-changing, but good.

Page summarization is the standout. Click the AI summary button on a long page, get a paragraph back. For anything over 2,000 words, it beats skimming. Diana started using it as a triage tool -- she'd read the summary to figure out if a page was actually relevant to whatever question she was answering, then decide whether to read the full thing. Saves her maybe 15 minutes on a typical day. Not life-changing, but she'd notice if it disappeared.

Q&A on individual pages is decent. You type a question like "What authentication method does this service use?" and it pulls the answer from the page text. Kenji ran it through 20 questions as a test. It got 17 right, or 85%. The misses were mostly pages with gnarly tables or conditional logic -- the AI would flatten the structure and give you a confidently wrong answer. So: trust but verify, especially on anything with a complex layout.

Content drafting is fine for getting past the blank-page problem. You tell it what you want, it gives you a skeleton. Elena used it for an incident postmortem template -- the AI nailed the section structure (summary, timeline, root cause, action items) and she spent ten minutes tweaking it to match her team's format. Without the draft, she figures that would have been a 30-minute job. Handy, but she creates new pages maybe twice a month.

The editing suggestions -- tone adjustments, making things shorter or longer -- are table stakes at this point. Every AI writing tool does this. Select text, pick an option, review the output. Fine for polishing.

These features are fine. They do what they say. The problem is that none of them do what Diana actually needed.

What Diana Actually Needed

Diana tracked where her six hours actually went: about two hours chasing down and updating stale pages. An hour and a half fielding questions from people who couldn't find what they needed. Another hour spot-checking whether documented processes still matched what the team actually does. An hour untangling duplicate and contradictory pages. And the remaining half hour on grunt work -- broken links, missing labels, the kind of thing you'd automate if you could.

Summarization shaves a few minutes off the question-answering part. She reads the summary instead of the full page when someone pings her. Helpful, sure. But we're talking minutes, not hours.

Drafting? She creates maybe two new pages a month. Real savings there, but infrequent enough that it barely moves the needle on her weekly total.

The features that would actually transform her week don't exist in Atlassian Intelligence. Finding stale content, flagging when page A contradicts page B, checking whether the deployment process described in a runbook still matches the pipeline the team actually uses -- none of that is in scope. Confluence AI looks at one page at a time. It has no idea what's on the page next to it, let alone whether the Jenkins reference in your docs should have been updated to GitHub Actions three months ago.

The Specific Gaps

There's no stale content detection whatsoever. Yes, Confluence tracks edit dates -- you can sort pages by "last modified." But that metric is almost useless for freshness. A page touched two weeks ago might reference a deprecated API that nobody caught. Meanwhile, a page untouched for six months might be perfectly accurate because the underlying process hasn't budged. Staleness is about semantic accuracy, not timestamps. You'd need something that reads the page, understands what it describes, and cross-checks against the actual state of things. That's not on Atlassian's roadmap as far as I can tell.
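To make the timestamp-versus-content distinction concrete, here is a minimal sketch. The `Page` class, the `DEPRECATED_TERMS` list, and the 90-day cutoff are all made up for illustration, and the keyword match is only a crude stand-in for the semantic check described above.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Page:
    title: str
    body: str
    last_modified: date


# Hypothetical list of retired terms; a real check would pull this from
# whatever source of truth tracks deprecations.
DEPRECATED_TERMS = ["v1/auth", "Jenkins"]


def stale_by_timestamp(page: Page, today: date, max_age_days: int = 90) -> bool:
    """Flag a page only because nobody has edited it recently."""
    return today - page.last_modified > timedelta(days=max_age_days)


def stale_by_content(page: Page, deprecated_terms: list[str]) -> list[str]:
    """Return the deprecated terms the page still mentions (case-insensitive)."""
    text = page.body.lower()
    return [term for term in deprecated_terms if term.lower() in text]


today = date(2024, 6, 1)
recent_but_wrong = Page(
    "Login flow",
    "Call the v1/auth endpoint to get a token.",
    today - timedelta(days=14),
)
old_but_right = Page(
    "Expense policy",
    "Submit receipts by the 5th of each month.",
    today - timedelta(days=180),
)

for page in (recent_but_wrong, old_but_right):
    print(
        page.title,
        stale_by_timestamp(page, today),
        stale_by_content(page, DEPRECATED_TERMS),
    )
```

The two signals disagree on both sample pages: the recently edited page passes the timestamp check while still pointing at a retired endpoint, and the six-month-old page fails the timestamp check despite containing nothing outdated.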

Cross-page analysis? Completely absent. Diana has at least 30 pairs of pages in her 600-page knowledge base that partially overlap. Two different deployment guides with slightly different steps. Three authentication pages, written at different times by different authors, contradicting each other in small but meaningful ways. Confluence AI will happily summarize each one in isolation. It won't notice they disagree with each other, won't tell you which is authoritative, and definitely won't suggest merging or archiving the stale one.
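One rough way to surface partially overlapping pages is Jaccard similarity over word trigrams. This is a sketch of that general technique, not a description of any Atlassian or agent internals. The sample pages and the 0.3 threshold are assumptions.

```python
import re
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Lowercase the text and return its set of word n-grams."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared items divided by all distinct items; 0.0 if both sets are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def overlapping_pairs(pages: dict[str, str], threshold: float = 0.3):
    """Yield (title_a, title_b, score) for page pairs at or above the threshold."""
    sets = {title: shingles(body) for title, body in pages.items()}
    for a, b in combinations(sorted(sets), 2):
        score = jaccard(sets[a], sets[b])
        if score >= threshold:
            yield a, b, round(score, 2)


# Made-up page bodies for illustration.
pages = {
    "Deploy guide (old)": "Run the build then push the image and restart the service",
    "Deploy guide (new)": "Run the build then push the image and trigger the rollout",
    "Expense policy": "Submit receipts by the fifth of each month",
}

for pair in overlapping_pairs(pages):
    print(pair)
```

A score like this only says two pages cover similar ground. Deciding which one is authoritative still takes a reader, human or otherwise.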

There's also no connection to external systems. When engineering changes how deployments work -- say, they swap out a CI provider or restructure the release pipeline -- the Confluence pages describing the old process just sit there, silently wrong. Nobody gets alerted. Confluence AI can't monitor Jira tickets, pull requests, or Slack conversations for signals that something documented has changed. It lives inside its own walls with no view of the outside.

And then there's the onboarding problem. Every time Diana gets a new engineer, she manually stitches together an onboarding guide from 15 to 20 pages spread across three spaces. Copy the relevant sections, rewrite them for a first-week audience, put them in a sensible order. The drafting feature can't help here -- it generates generic content from a prompt. What she needs is something that reads her team's actual documentation and synthesizes a guide from it. That's a fundamentally different capability.

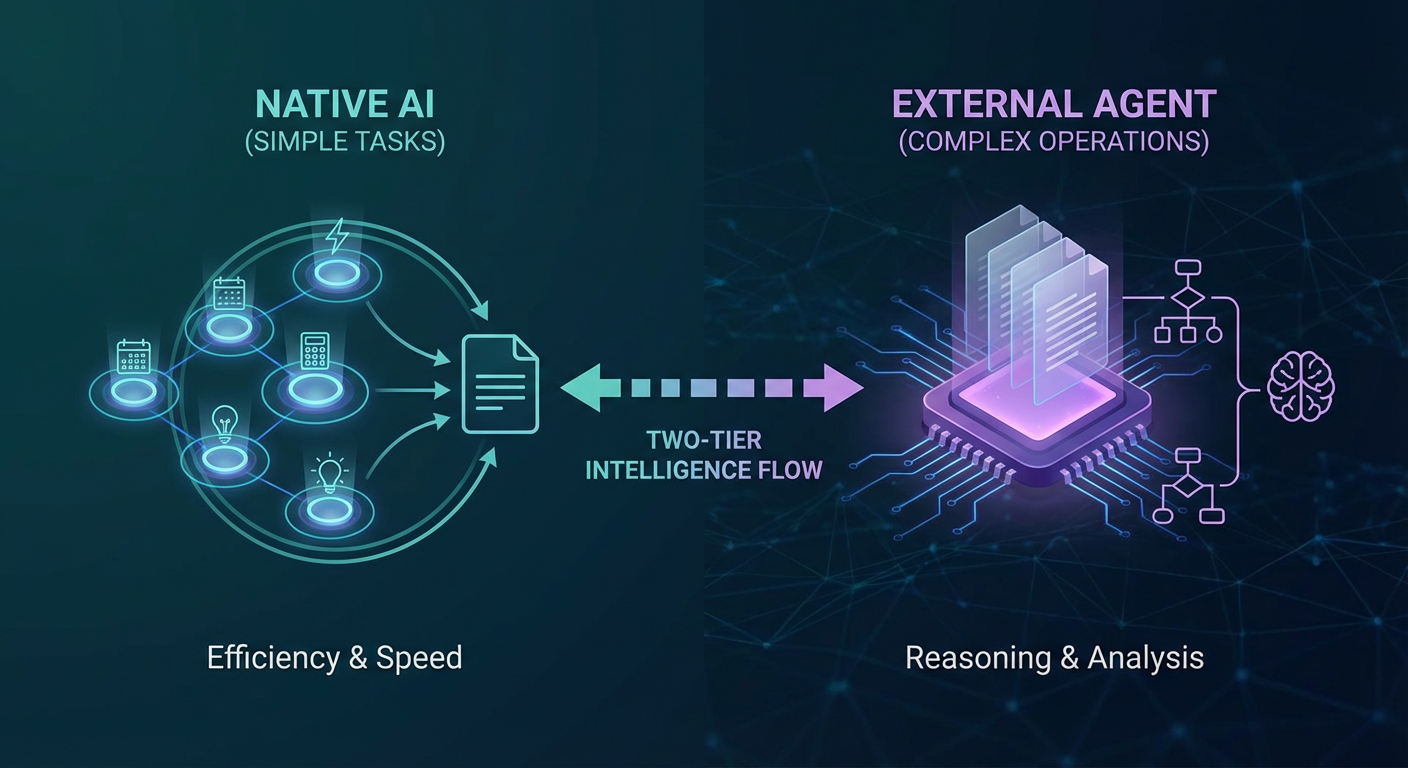

Where External Agents Fill the Gap

We started with an onboarding guide generator. Rather than Diana spending three hours manually pulling from 15 to 20 pages, the agent reads across all her Confluence spaces, identifies what's relevant for a new hire's role, and stitches together a structured guide. Last quarter she onboarded two engineers -- the agent produced a 15-page guide drawn from 22 source pages. Diana spent 20 minutes reviewing it. Twenty minutes versus three hours. That math speaks for itself.

The part that surprised her was the auto-updating. When Kenji rewrote the deployment runbook, the onboarding guide's "Your First Deployment" section reflected the changes within a day. Under the old workflow, Diana might have caught the discrepancy weeks later. Or she might not have caught it at all, and a new hire would have followed outdated steps on their first deploy.
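How the agent detects a changed source page isn't something this post covers. One plausible approach, sketched here purely as an assumption, is to store a hash of each source page at build time and regenerate any guide section whose sources no longer match. All page titles and bodies below are invented.

```python
import hashlib


def fingerprint(body: str) -> str:
    """Stable hash of a page body, used to notice edits."""
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def sections_to_regenerate(
    section_sources: dict[str, list[str]],
    stored_fingerprints: dict[str, str],
    current_bodies: dict[str, str],
) -> list[str]:
    """Return guide sections with at least one source page changed since the last build."""
    changed = {
        title
        for title, body in current_bodies.items()
        if stored_fingerprints.get(title) != fingerprint(body)
    }
    return [
        section
        for section, sources in section_sources.items()
        if changed.intersection(sources)
    ]


# Which source pages feed which guide section (hypothetical mapping).
section_sources = {
    "Your First Deployment": ["Deployment runbook"],
    "Who to Ask": ["Team directory"],
}
last_build = {
    "Deployment runbook": "Deploy by running the release job by hand.",
    "Team directory": "Ask Diana about documentation.",
}
stored = {title: fingerprint(body) for title, body in last_build.items()}

# Simulate a rewrite of the runbook; the directory is unchanged.
current = {**last_build, "Deployment runbook": "Deploy by merging to main."}

print(sections_to_regenerate(section_sources, stored, current))
```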

For stale content, an audit agent runs weekly scans across all 600 pages. It cross-references what's written against Jira tickets, linked repos, and other pages in the knowledge base, then produces a report: here's what looks wrong, here's why. This is exactly the kind of cross-cutting analysis that Atlassian Intelligence can't touch, because it requires connecting Confluence content to the outside world.
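The shape of that report is easy to sketch, even though the real agent's logic is more involved. The example below assumes change records have already been pulled from an external system. `ChangeRecord`, `Finding`, and the ticket key `OPS-123` are hypothetical names, and the substring match is a simplification.

```python
from dataclasses import dataclass


@dataclass
class ChangeRecord:
    source: str       # ticket or PR reference (hypothetical format)
    retired: str      # the tool or process that was replaced
    replacement: str  # what replaced it


@dataclass
class Finding:
    page: str
    reason: str


def audit(pages: dict[str, str], changes: list[ChangeRecord]) -> list[Finding]:
    """Flag pages that mention a retired item without mentioning its replacement."""
    findings = []
    for title, body in sorted(pages.items()):
        text = body.lower()
        for change in changes:
            mentions_old = change.retired.lower() in text
            mentions_new = change.replacement.lower() in text
            # Pages that mention both (for example, migration notes) are skipped.
            if mentions_old and not mentions_new:
                findings.append(
                    Finding(
                        page=title,
                        reason=(
                            f"mentions {change.retired}, but {change.source} "
                            f"replaced it with {change.replacement}"
                        ),
                    )
                )
    return findings


# Invented sample data.
pages = {
    "Release runbook": "Trigger the Jenkins job and wait for the green build.",
    "CI overview": "All builds run on GitHub Actions.",
}
changes = [ChangeRecord("OPS-123", "Jenkins", "GitHub Actions")]

report = audit(pages, changes)
for finding in report:
    print(f"{finding.page}: {finding.reason}")
```

Each finding carries its reason, which matches the "here's what looks wrong, here's why" format of the weekly report.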

The contradiction problem got a similar treatment. A cross-space search agent reads related pages, spots overlapping content, and flags inconsistencies with specifics: "Page A says deployments use Jenkins. Page B says GitHub Actions. Page B was updated more recently." It's doing the comparison work that Diana used to do manually -- reading both pages, reasoning about which one is authoritative. No single-page tool can get there.
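A toy version of that flag, assuming the set of mutually exclusive answers is known up front, could look like the following. `CI_OPTIONS`, the `Page` class, and the sample pages are illustrative only; a real agent would have to infer the competing claims from the text.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Page:
    title: str
    body: str
    updated: date


# Hypothetical set of mutually exclusive answers to one question:
# "which CI system runs deployments?"
CI_OPTIONS = ["Jenkins", "GitHub Actions"]


def mentioned(page: Page, options: list[str]) -> set[str]:
    """Return the options that appear in the page body (case-insensitive)."""
    text = page.body.lower()
    return {option for option in options if option.lower() in text}


def flag_contradiction(a: Page, b: Page, options: list[str]) -> Optional[str]:
    """Describe the disagreement if the two pages name different options."""
    found_a, found_b = mentioned(a, options), mentioned(b, options)
    # No flag if either page is silent or the two pages share an answer.
    if not found_a or not found_b or found_a & found_b:
        return None
    # On a tie, b is reported as the newer page.
    newer = a if a.updated > b.updated else b
    return (
        f"{a.title} says {', '.join(sorted(found_a))}. "
        f"{b.title} says {', '.join(sorted(found_b))}. "
        f"{newer.title} was updated more recently."
    )


page_a = Page("Page A", "Deployments run through Jenkins.", date(2023, 9, 1))
page_b = Page("Page B", "Deployments run through GitHub Actions.", date(2024, 2, 1))

print(flag_contradiction(page_a, page_b, CI_OPTIONS))
```

Recency is only a hint here. The newer page is not guaranteed to be the authoritative one, which is why the output reports the date instead of picking a winner.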

An Honest Assessment of Atlassian Intelligence

Look, Atlassian Intelligence is a solid v1. Turn it on -- it's bundled with Confluence Cloud Premium and Enterprise at no extra cost, so you're leaving value on the table if you don't. The summarization genuinely helps. Q&A works more often than it doesn't. Drafting gets you past the blank page.

But here's the gap that matters: all of this operates at the page level. And knowledge management problems are never page-level problems. They're about relationships between pages. They're about drift between what's documented and what's actually happening. They're about a new hire needing a curated path through 600 pages of tribal knowledge written by 40 different people over three years.

I expect Atlassian will expand into cross-page features eventually. They have the content, they have the models, they have the product surface. But today? Today it's a reading assistant, not a knowledge management system.

Diana put it best after six months: "Confluence AI saves me maybe 30 minutes a week. The audit agent saves me three hours. They're not competing. One helps me read pages faster. The other makes sure the pages are worth reading."

So yes, use Confluence AI. But don't confuse it with a solution to the maintenance problem. Keeping a 600-page knowledge base accurate requires something that reads across everything, compares against the real world, and acts on what it finds. That's a different category of tool entirely.

Try These Agents

- Confluence Onboarding Guide Generator -- Generate role-specific onboarding guides from your existing Confluence content
- Confluence Knowledge Base Auditor -- Audit all pages for stale content, contradictions, and broken links
- Confluence Documentation Updater -- Automatically update pages when processes or tools change