Google Analytics Data API: Build It Yourself or Let an Agent Handle It

Marcus, our analytics engineer, disappeared for three weeks. When he resurfaced, he had a Python pipeline that pulled GA4 Data API numbers, ran them through a transformation layer, and dropped formatted reports into a Google Sheet. The thing actually worked. A cron job on an EC2 instance kicked it off every Monday at 6 AM. By the time people were pouring coffee, the reports were sitting in the shared drive.

Then Marcus went on vacation. Day four, the whole thing died. The service account credentials had been rotated. Marketing stared at the error logs like they were written in Sumerian. We spent two weeks pulling numbers by hand until Marcus flew back, spent an afternoon untangling the auth flow, and resurrected it.

That cycle repeated three more times over the next six months. Not always credentials. Sometimes the GA4 property structure changed. Once it was a breaking change in the Google Analytics Data API v1beta that renamed a dimension. Once the EC2 instance ran out of disk space because nobody had set up log rotation.

Marcus writes clean code. That was never the issue. The issue was that we'd built a little piece of infrastructure that demanded feeding and watering, and the people who actually needed the reports couldn't change a tire on it.

What the GA4 Data API Actually Does

Quick primer on what this thing is, since I've seen people confuse it with the Admin API or the old Universal Analytics Reporting API. The GA4 Data API lets you pull report data from your GA4 property through code. You fire off a request with the metrics you want (sessions, users, conversions, revenue), the dimensions to slice by (source, medium, country, page path), a date range, and maybe some filters. Back comes structured data.

It is genuinely well-designed. The request format is clean. The documentation is thorough. You can pull almost anything that appears in the GA4 interface, plus combinations that the interface does not surface natively. Want sessions by source grouped by hour of day for the last 90 days, filtered to organic traffic only? The API can do that in a single request.
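To make that concrete, here is a rough sketch of what the request body for that example could look like against the v1beta `runReport` REST method. The property ID is a placeholder, and filtering on `sessionDefaultChannelGroup` equal to "Organic Search" is my assumption about what "organic only" means for your property. It only builds the URL and body; sending it still needs an authenticated HTTP client.

```python
import json

# Placeholder property ID (hypothetical); replace with your own GA4 property.
PROPERTY_ID = "123456789"


def run_report_url(property_id):
    """URL of the v1beta runReport method for one property."""
    return (
        "https://analyticsdata.googleapis.com/v1beta/"
        f"properties/{property_id}:runReport"
    )


def build_run_report_body():
    """Sessions by source and hour of day, last 90 complete days, organic only."""
    return {
        "dimensions": [{"name": "sessionSource"}, {"name": "hour"}],
        "metrics": [{"name": "sessions"}],
        # Relative dates: 90daysAgo through yesterday covers 90 full days.
        "dateRanges": [{"startDate": "90daysAgo", "endDate": "yesterday"}],
        # Assumption: "organic" means the Organic Search default channel group.
        "dimensionFilter": {
            "filter": {
                "fieldName": "sessionDefaultChannelGroup",
                "stringFilter": {"matchType": "EXACT", "value": "Organic Search"},
            }
        },
    }


if __name__ == "__main__":
    print(run_report_url(PROPERTY_ID))
    print(json.dumps(build_run_report_body(), indent=2))
```

One body, one POST. The work that follows (auth, config, reshaping, delivery) is where the hours go.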

The difficulty is not in making API calls. The difficulty is in everything around the API calls.

The Real Cost of Building It Yourself

Marcus's three-week project broke down roughly like this. Authentication and credential management ate about four days. Google's OAuth2 flow for service accounts? Thoroughly documented. Also surprisingly annoying. You need a service account, a JSON key file, the service account's email added as a user with at least Viewer access on the GA4 property, and a token refresh mechanism. First-time setup goes smoothly enough. Then six weeks later something rotates or a permission changes and you're back in the weeds.
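One cheap guard against the Monday-morning surprise is a preflight check that runs before the report does. The sketch below is not what Marcus built; it is a minimal example that only inspects the JSON key file's shape using field names found in Google service account keys. It cannot tell you that a key was revoked or that access on the property was removed, so treat it as a first line of defense only.

```python
import json

# Fields found in a Google service account JSON key file.
REQUIRED_KEY_FIELDS = (
    "type",
    "client_email",
    "private_key",
    "private_key_id",
    "token_uri",
)


def check_service_account_key(raw_json):
    """Return a list of readable problems; an empty list means the key looks usable."""
    try:
        key = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        return [f"key file is not valid JSON: {exc.msg}"]
    if not isinstance(key, dict):
        return ["key file must contain a JSON object"]

    problems = [
        f"missing field: {name}" for name in REQUIRED_KEY_FIELDS if not key.get(name)
    ]
    # A user credential file saved by mistake has a different "type" value.
    if key.get("type") and key["type"] != "service_account":
        problems.append(f"unexpected key type: {key['type']}")
    return problems
```

Printing those problems in plain English is the difference between "the key file is missing private_key" and a stack trace nobody in marketing can read.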

Report configuration burned another three days. The question sounds simple: which metrics, which dimensions, what date ranges, what filters? Marcus hard-coded the first version. Big mistake. Every time marketing wanted a new metric, he had to crack open the script. So he built a YAML config file. Then Elena asked for a year-over-year comparison by week, which needed completely different date logic from the week-over-week setup. There went another afternoon.
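The date logic is a good illustration of why "just add a comparison" is never one line. Here is a minimal sketch, assuming "week" means the last seven complete days and that year-over-year should line up weekdays by stepping back exactly 52 weeks. The function names are mine, not from Marcus's script. The output dicts use the `startDate` and `endDate` keys that a `runReport` body expects.

```python
from datetime import date, timedelta


def week_ending(end):
    """Seven-day range ending on `end`, inclusive, as ISO date strings."""
    start = end - timedelta(days=6)
    return {"startDate": start.isoformat(), "endDate": end.isoformat()}


def week_over_week(today):
    """Last seven complete days, then the seven days before them."""
    last_end = today - timedelta(days=1)
    return [week_ending(last_end), week_ending(last_end - timedelta(days=7))]


def year_over_year(today):
    """Last seven complete days, then the same weekdays 52 weeks earlier."""
    last_end = today - timedelta(days=1)
    return [week_ending(last_end), week_ending(last_end - timedelta(weeks=52))]


if __name__ == "__main__":
    monday = date(2024, 3, 11)
    print(week_over_week(monday))
    print(year_over_year(monday))
```

Stepping back 52 weeks instead of one calendar year keeps Mondays compared with Mondays, at the cost of drifting a day or two from the calendar date. Whether that is the right trade depends on what Elena wants to see.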

Data transformation ate nearly a week. Raw API responses are nested JSON objects. Turning those into formatted spreadsheet rows with proper headers, percentage calculations, and week-over-week deltas required a transformation layer. Handling edge cases (what if a dimension has zero sessions this week but had sessions last week?) added complexity that was not obvious upfront.
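For a sense of what that layer involves, here is a rough sketch of the two core steps: flattening a `runReport`-style response into plain rows, and computing week-over-week deltas without dropping sources that appear in only one of the two weeks. The helper names are hypothetical, and returning `None` for the percent change when last week was zero is one possible convention, not the only one.

```python
def flatten_report(response):
    """Turn a runReport-style response into a list of flat dicts."""
    dim_names = [h["name"] for h in response.get("dimensionHeaders", [])]
    met_names = [h["name"] for h in response.get("metricHeaders", [])]
    rows = []
    # The "rows" key can be missing entirely when nothing matched.
    for row in response.get("rows", []):
        record = {
            name: cell["value"]
            for name, cell in zip(dim_names, row.get("dimensionValues", []))
        }
        for name, cell in zip(met_names, row.get("metricValues", [])):
            record[name] = float(cell["value"])  # metric values arrive as strings
        rows.append(record)
    return rows


def week_over_week_delta(this_week, last_week, key, metric):
    """Compare two flattened reports, keeping keys present in only one week."""
    current = {r[key]: r[metric] for r in this_week}
    previous = {r[key]: r[metric] for r in last_week}
    out = []
    for name in sorted(set(current) | set(previous)):
        now = current.get(name, 0.0)
        before = previous.get(name, 0.0)
        # Percent change is undefined against a zero baseline, so report None.
        pct = None if before == 0 else (now - before) / before * 100
        out.append(
            {key: name, "current": now, "previous": before, "pct_change": pct}
        )
    return out
```

The union of keys is the part that is easy to miss: iterate over this week's rows only and a source that went to zero silently vanishes from the report.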

Last chunk: delivery and scheduling. Cron job setup, Google Sheets API for output, error handling so failures wouldn't just vanish into the void. Marcus wired up a Slack notification for failures. Smart move. Except the Slack webhook URL expired after 90 days and, you guessed it, nobody noticed.
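The webhook story suggests a design rule: the alert path can fail too, so never rely on a single channel. Below is a minimal sketch of a wrapper that tries every notifier, always writes to stderr, and returns a nonzero exit code so cron's own mail or log capture has something to report. `run_with_alerts` and the notifier callables are hypothetical names; a real Slack or email sender would be plugged in as one of the notifiers.

```python
import sys
import traceback

# Crontab schedule for Mondays at 06:00 would be: 0 6 * * 1


def run_with_alerts(job, notifiers):
    """Run `job`; on failure, try each notifier and return a nonzero exit code."""
    try:
        job()
        return 0
    except Exception:
        message = "Weekly GA4 report failed:\n" + traceback.format_exc()

    delivered = False
    for notify in notifiers:
        try:
            notify(message)
            delivered = True
        except Exception as exc:
            # A dead alert channel must not hide the original failure.
            print(f"notifier failed: {exc!r}", file=sys.stderr)

    # Always leave a trace locally, whatever happened to the notifiers.
    print(message, file=sys.stderr)
    return 1 if delivered else 2
```

A script would end with something like `sys.exit(run_with_alerts(build_report, [send_slack, send_email]))`, where the three callables are yours to supply. Exit code 2 means the job failed and nobody was told, which is the case worth monitoring separately.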

Grand total: about 120 hours of engineering time. For a report that a human can produce manually in 45 minutes per week. Let that ratio sink in.

Where DIY Pipelines Break Down

The ongoing maintenance is where the math stops working. Marcus estimated he spent about two hours per month maintaining the pipeline after the initial build. That does not sound like much until you factor in the unplanned outages. Credential rotations. API version changes. Infrastructure issues. Each one cost somewhere between 30 minutes and half a day, and they always happened when Marcus was busy with something else.
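Here is that math spelled out, using only the figures above: 120 hours to build, 45 minutes a week saved, two hours a month of planned upkeep. Averaging the monthly upkeep over 52 weeks is my simplification, and unplanned outages are left out, so the real number is worse.

```python
from fractions import Fraction

BUILD_HOURS = 120                          # initial build estimate
MANUAL_HOURS_PER_WEEK = Fraction(45, 60)   # 45 minutes by hand each week
MAINTENANCE_HOURS_PER_MONTH = 2            # planned maintenance only


def break_even_weeks(build_hours, saved_per_week, upkeep_per_week=Fraction(0)):
    """Weeks until the build pays for itself, or None if it never does."""
    net = saved_per_week - upkeep_per_week
    if net <= 0:
        return None
    return build_hours / net


# Spread twelve months of upkeep evenly across 52 weeks.
upkeep_per_week = Fraction(MAINTENANCE_HOURS_PER_MONTH * 12, 52)

no_upkeep = break_even_weeks(BUILD_HOURS, MANUAL_HOURS_PER_WEEK)
with_upkeep = break_even_weeks(BUILD_HOURS, MANUAL_HOURS_PER_WEEK, upkeep_per_week)

print(float(no_upkeep), float(with_upkeep))  # 160.0 416.0
```

Ignoring maintenance, the pipeline pays for itself after 160 weeks, a little over three years. With planned maintenance included, it takes 416 weeks, which is eight years.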

Iteration was the real killer though. Priya wanted conversion funnel data in the weekly report. In a dashboard tool, she'd drag a widget over. In Marcus's pipeline? New API calls. New transformation logic. New spreadsheet columns. End-to-end testing. What should have been a 15-minute request ballooned into three hours of work.

By month six, the pipeline was serving one static report format. The marketing team had stopped asking Marcus for changes because they felt bad about taking his time. The tool had calcified.

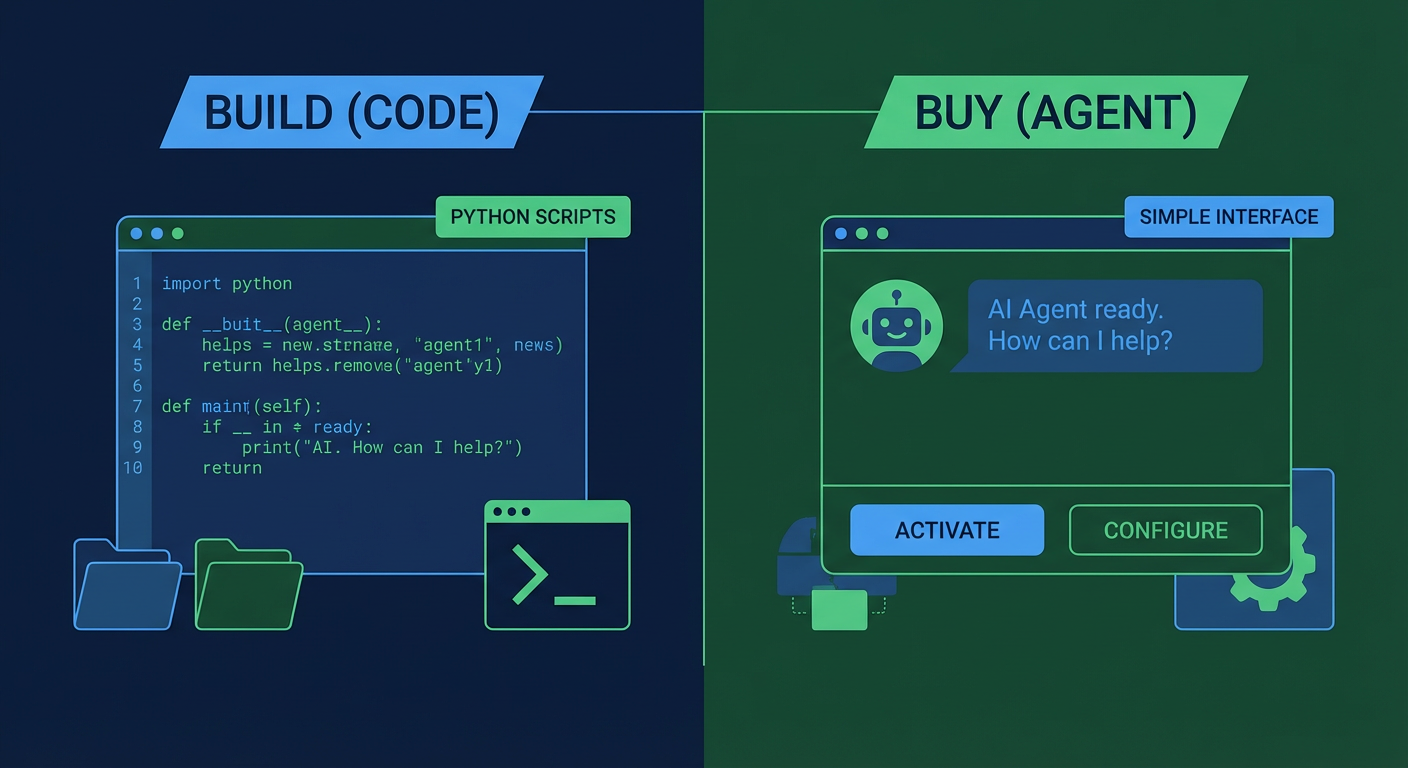

What an Agent Does Differently

An AI agent with GA4 Data API access skips the three-week build entirely. You just tell it what you want. "Sessions by source, last seven days versus the prior seven, with bounce rate and session duration." The agent works out which endpoints to hit, builds the requests, deals with pagination when the response is huge, and formats everything.
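Pagination is a good example of the plumbing that gets handled for you. For reference, a hand-rolled version might look like the sketch below. It relies on the `limit` and `offset` request fields and the `rowCount` response field of `runReport`; the `run_report` argument is a hypothetical callable that sends one request body and returns the parsed response, and the default page size is just a starting point.

```python
def fetch_all_rows(run_report, body, page_size=10000):
    """Collect every row by advancing `offset` until `rowCount` rows are read."""
    rows = []
    offset = 0
    while True:
        # Copy the body so the caller's dict is never mutated.
        page = run_report({**body, "limit": page_size, "offset": offset})
        batch = page.get("rows", [])
        rows.extend(batch)
        offset += len(batch)
        # Stop on an empty page too, so a bad rowCount cannot loop forever.
        if not batch or offset >= page.get("rowCount", 0):
            return rows
```

It is only a dozen lines, but it is a dozen lines somebody has to remember to write, test against a large property, and keep working.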

The GA4 Channel Attribution Analyzer is a good example. It pulls multi-touch attribution data from the API, breaks down how different channels contribute to conversions across the funnel, and writes a summary that a non-technical marketer can actually read. Building the equivalent as a custom pipeline would take days of work on the attribution modeling alone.

The difference shows up most clearly when requirements change. "Can you also include revenue by channel?" In a custom pipeline, that is a code change, a test, a deploy. With an agent, it is a sentence added to the prompt. Elena tested this herself. She asked the agent for traffic by device category, then five minutes later asked for the same data filtered to paid channels only. Both requests worked on the first try. No code. No deploy.

The Honest Trade-offs

I am not going to pretend agents are better in every scenario. They are not.

If you need sub-second latency on API calls that feed a production application, you should build a custom pipeline. Agents are not optimized for real-time data processing. Strict compliance requirements about where data gets processed and stored? A self-hosted pipeline gives you more control over that data path. Got a dedicated analytics engineer who genuinely likes building and maintaining data infrastructure? (They exist. Marcus is one.) The custom approach might honestly be the right call.

But for the use case most marketing teams actually have, which is "pull GA4 data weekly, format it nicely, send it to people who need it," an agent wins on every dimension that matters. Setup time: minutes instead of weeks. Maintenance on your side: close to zero instead of two-plus hours per month. Iteration speed: immediate instead of "file a ticket and wait."

Kenji on our team made the comparison that stuck with me. "Building a custom GA4 pipeline is like buying a car to get groceries. You can do it, and the car works great. But if you just need groceries, there are simpler options."

A Middle Ground That Works

Some teams split the difference. Agent handles weekly reporting, ad hoc questions, random one-off data pulls. A stripped-down custom pipeline handles only the specific feeds that absolutely must run in production with guaranteed uptime and hard SLAs.

Tomás runs our paid media team and uses this split. The agent handles his weekly channel performance reports and any exploratory questions about GA4 data. A simple data pipeline handles the nightly feed of conversion data into their bidding platform, because that feed needs to be fast, reliable, and running at 2 AM without human involvement.

His rule of thumb is dead simple. Output goes to a human who reads it? Agent. Output feeds another system that chews on it programmatically? Pipeline.

Marcus? Relieved. He killed the EC2 instance, archived the Python scripts, and got back two hours a month. He also shed the mental overhead of being the only human who could fix the Monday morning report when it inevitably broke. Marketing got a tool they could actually iterate on without filing engineering tickets. Win-win, for real this time.

Try These Agents

- GA4 Channel Attribution Analyzer -- Break down multi-touch attribution across channels and understand which sources actually drive conversions

- GA4 Weekly Traffic Report -- Automated weekly traffic summary with trends and anomaly detection delivered to Slack

- GA4 Ecommerce Performance Tracker -- Pull revenue, product performance, and conversion data from GA4 automatically

- GA4 Content Performance Auditor -- Audit which content drives traffic and conversions based on GA4 data