We Automated Our Supabase Data Ops. Our Engineers Stopped Writing CRUD Scripts.

Priya is an ops engineer who writes scripts for a living. Not the kind that ship as features. The kind that fix things. A CSV import went sideways, so she writes a script to patch 2,000 records. New pricing tier goes live, so she writes a migration script for existing customers. Marketing wants duplicate contacts cleaned up, so she writes a dedup script for the contacts table. Someone changed a column type and forgot to backfill the nulls, so she writes a script for that too.

Priya's team keeps these scripts in a folder called data-ops/. Last time I counted, there were 67 files in it. The oldest was from 14 months ago. The newest was from yesterday. Maybe 40% of them still work. The rest point at columns that got renamed, tables that got restructured, or API endpoints that got deprecated months ago.

This is the story of how Priya's team stopped writing those scripts.

The Script Lifecycle

Every ops script follows the same lifecycle. It starts as an urgent request. Someone drops a message in Slack: "Hey, we need all active subscriptions updated with the new billing code before EOD." Priya fires up her editor, bangs out a Node script that connects to Supabase, queries the subscriptions table, filters for active ones, patches the billing code. She tests against a handful of records, runs it in production, eyeballs the results, and moves on.
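For a sense of what such a script boils down to, here is a minimal sketch of its core logic in Python. The post does not show Priya's actual Node script, so everything here is an assumption made for illustration: the column names (`status`, `billing_code`), the sample rows, and the billing code value. The rows are plain in-memory dictionaries standing in for a query result, not a real Supabase call.

```python
from typing import Any

Row = dict[str, Any]


def plan_billing_code_update(rows: list[Row], new_code: str) -> list[Row]:
    """Return one patch per active subscription that lacks the new code."""
    return [
        {"id": row["id"], "billing_code": new_code}
        for row in rows
        if row["status"] == "active" and row.get("billing_code") != new_code
    ]


# Hypothetical rows standing in for the result of querying the subscriptions table.
subscriptions = [
    {"id": 1, "status": "active", "billing_code": "BC-OLD"},
    {"id": 2, "status": "canceled", "billing_code": "BC-OLD"},
    {"id": 3, "status": "active", "billing_code": "BC-NEW"},
]

patches = plan_billing_code_update(subscriptions, "BC-NEW")
print(patches)
```

The logic is a dozen lines. The two hours go into everything around it: connecting, testing against a handful of records, and checking the production run by eye.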

Total time: about two hours. No big deal, right?

The problem is frequency. Priya gets three or four of these requests per week. Some are one-time fixes. Some recur on a schedule (like the "update pricing" script that runs every quarter but needs tweaks each time because the schema changed). Some start as one-time fixes and quietly become recurring because the underlying data quality issue was never actually fixed.

Over a year, three or four two-hour scripts a week add up to 300-400 hours of engineering time spent on data janitorial work. That's roughly two months of full-time work, done by an engineer who could be building features, improving infrastructure, or (novel idea) actually fixing the root causes of the data quality issues.

Why Scripts Accumulate

The obvious question is: why not build proper tooling instead of one-off scripts?

Priya has tried. She's built internal admin dashboards with bulk update forms. She's set up cron jobs for recurring cleanup tasks. She's wired up database triggers to catch bad data before it gets in.

Every one of these solutions works great for the specific problem it was built to solve. The admin dashboard covers the five bulk operations that were most common six months ago. The cron jobs clean up the three data patterns that caused issues last quarter. The triggers catch the four types of invalid data that caused outages last year.

But new problems keep showing up. The business changes, the schema evolves, and new data quality issues emerge that don't fit the existing tooling. So Priya writes another script. And another. And the folder grows.

The root issue is that data operations are inherently ad-hoc. You have no idea which table will need a bulk update next month, what flavor of dedup logic will be required, or which external system will start sending garbage data. Building custom tooling for every conceivable scenario is a treadmill with no end. Writing one-off scripts is the reasonable response to unpredictable requirements.

But "reasonable" and "good" aren't the same thing.

The Turning Point

The turning point for Priya's team came when three things happened in the same week.

First, a script from four months ago was re-run by a junior engineer who didn't realize the subscriptions table had been restructured. The script updated the wrong column on 800 records. Fixing the damage took a full day.

Second, Priya burned an entire afternoon writing a dedup script for the contacts table, only to discover an almost-identical script already sitting in the folder. Different filename, written by a developer who'd left six months ago, and -- insult to injury -- it used a matching algorithm that was actually better than the one she'd just finished.

Third, finance asked for a monthly reconciliation between Supabase and their accounting system. Priya started scoping the script and quickly counted twelve distinct edge cases. A week of work, minimum, for something that runs once a month and would almost certainly break within three months when the accounting system's API inevitably changed.
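The first incident, a stale script re-run against a restructured table, is the kind of failure a preflight check can catch. The sketch below is one way to do it, not something Priya's team is described as having used. The column names are hypothetical, and in practice the current column set would be read from the database (for example from Postgres's `information_schema.columns`) rather than hard-coded.

```python
class SchemaDriftError(RuntimeError):
    """Raised when a table no longer has the columns a script expects."""


def assert_schema(table: str, expected: set[str], actual: set[str]) -> None:
    """Refuse to continue if any column the script relies on is gone."""
    missing = expected - actual
    if missing:
        raise SchemaDriftError(
            f"{table} is missing expected columns: {sorted(missing)}"
        )


# Hypothetical: the columns the old script was written against.
EXPECTED = {"id", "status", "billing_code"}
# Hypothetical: the columns that exist after the table was restructured.
current_columns = {"id", "status", "plan_id"}

try:
    assert_schema("subscriptions", EXPECTED, current_columns)
    message = "schema ok, safe to run"
except SchemaDriftError as err:
    message = f"refusing to run: {err}"

print(message)
```

A check like this only helps if every script declares what it expects, which is exactly the kind of discipline that one-off scripts written under deadline tend to skip.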

That's when she decided to try something different.

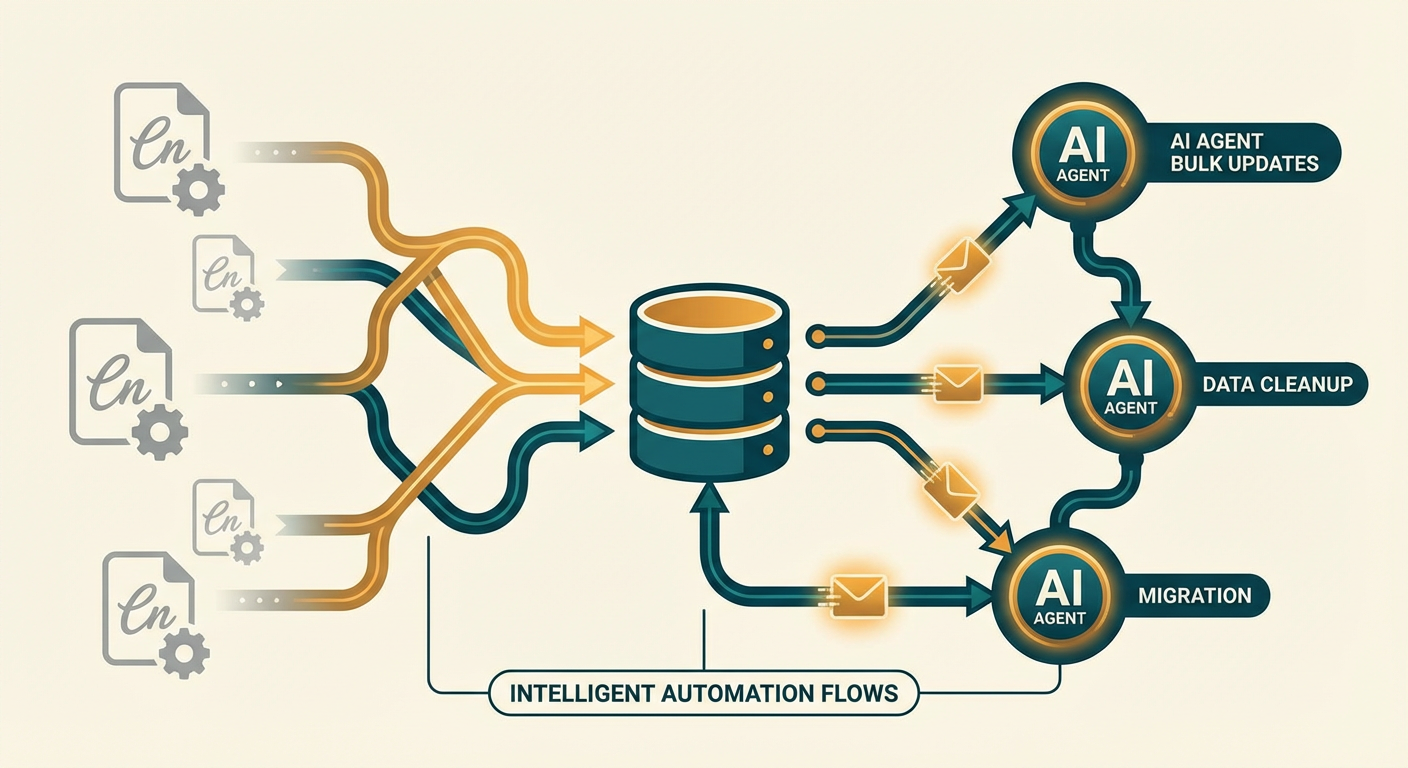

Agents Instead of Scripts

The concept is dead simple: instead of hard-coding logic for each data operation in a bespoke script, describe what you want in plain language and let an AI agent work out the implementation.

Take the deduplication problem. The script version has to match contacts on email address, normalize the format, group duplicates, pick a primary record, and merge the others. Instead of writing all that, Priya told the agent: "Find duplicate contacts in the contacts table. Match on normalized email address. Keep whichever record has the most recent activity. Merge any unique data from the dupes into the primary. Flag the duplicates for deletion but don't actually delete anything yet."

The agent inspected the table schema, figured out the relevant columns, wrote matching logic, ran it, and spit out a report: 340 duplicate groups, 340 primary records, 520 duplicates flagged. Priya looked it over, approved the merge, done. What would have been an afternoon got compressed into twenty minutes.
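The post doesn't show the logic the agent generated, but the instructions Priya gave map onto a fairly small algorithm. Here is a rough sketch of it, under stated assumptions: the field names (`email`, `phone`, `last_activity`), the sample contacts, and the choice to treat normalization as trim-and-lowercase are all hypothetical. Nothing is deleted; the function only returns a plan for review.

```python
from collections import defaultdict
from datetime import date


def normalize_email(email: str) -> str:
    """Assumed normalization: strip whitespace and lowercase."""
    return email.strip().lower()


def plan_dedup(contacts: list[dict]) -> list[dict]:
    """Group by normalized email, keep the most recently active record,
    fill its empty fields from the duplicates, and flag the rest."""
    groups: dict[str, list[dict]] = defaultdict(list)
    for contact in contacts:
        groups[normalize_email(contact["email"])].append(contact)

    plan = []
    for email, members in groups.items():
        if len(members) < 2:
            continue  # not a duplicate group
        ordered = sorted(members, key=lambda c: c["last_activity"], reverse=True)
        primary, dupes = ordered[0], ordered[1:]
        merged = dict(primary)
        for dupe in dupes:
            for field, value in dupe.items():
                # Only fill gaps; never overwrite data on the primary.
                if merged.get(field) in (None, "") and value not in (None, ""):
                    merged[field] = value
        plan.append({
            "email": email,
            "primary_id": primary["id"],
            "merged": merged,
            "flag_for_deletion": [d["id"] for d in dupes],
        })
    return plan


# Hypothetical sample data.
contacts = [
    {"id": 1, "email": "Ana@Example.com ", "phone": None, "last_activity": date(2024, 1, 5)},
    {"id": 2, "email": "ana@example.com", "phone": "555-0100", "last_activity": date(2023, 11, 2)},
    {"id": 3, "email": "bo@example.com", "phone": None, "last_activity": date(2024, 2, 1)},
]

plan = plan_dedup(contacts)
print(plan)
```

Each duplicate group yields exactly one primary, which is why the report lists 340 groups and 340 primaries, with the 520 flagged duplicates spread across them.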

For the monthly reconciliation, Priya took the same approach. Rather than writing and maintaining a fragile reconciliation script, she configured an agent with her rules. When the accounting system's API changes (and it will), she updates the agent's instructions in plain English instead of debugging broken parsing code. The Supabase Database Cleanup Agent, meanwhile, runs weekly checks for stale, orphaned, and duplicate records.

What Changed

Six months after switching to agents for data operations, Priya's data-ops/ folder has stopped growing. The last script added was from the transition period. New data operations go through agents.

Here's what a typical week looks like now:

Bulk updates. Business team needs records changed -- new status, new tag, property modification across a filtered set? Priya describes it, the agent executes it. It uses Cotera's Supabase tools (Create Record, Read Records, Update Record, Delete Record, Upsert Record) to talk to the database. Zero scripts to write, test, or babysit.

Data cleanup. The cleanup agent runs on a schedule, looking for the patterns Priya defined: orphaned records (foreign keys pointing at deleted rows), stale records (untouched for 90+ days with no associated activity), duplicates (matching on configurable fields). It produces a report. Priya reviews it. The cleanup executes.

Cross-system sync. The Supabase Data Sync Agent keeps Supabase tables aligned with external systems. When the accounting system's data format changes, Priya updates the agent's instructions instead of debugging a broken API parser at 11 PM.

Ad-hoc queries. Support and ops people used to ping Priya in Slack asking her to write SQL queries for them. Now they describe what they're looking for, an agent builds the query, runs it against the read replica, and hands back the results. Priya gets fewer Slack messages. The support team gets faster answers.
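To make the cleanup patterns above concrete, here is a small sketch of the orphaned and stale checks. It is an illustration, not the agent's implementation. The table shapes, the `customer_id` foreign key, the `updated_at` column, and the sample rows are all hypothetical. Only the 90-day threshold comes from the rules described above.

```python
from datetime import datetime, timedelta


def find_orphans(children: list[dict], parent_ids: set[int], fk: str) -> list[int]:
    """IDs of rows whose foreign key points at a parent that no longer exists."""
    return [row["id"] for row in children if row[fk] not in parent_ids]


def find_stale(rows: list[dict], now: datetime, ids_with_activity: set[int],
               max_age_days: int = 90) -> list[int]:
    """IDs of rows untouched for max_age_days or more with no associated activity."""
    cutoff = now - timedelta(days=max_age_days)
    return [
        row["id"]
        for row in rows
        if row["updated_at"] <= cutoff and row["id"] not in ids_with_activity
    ]


# Hypothetical sample data.
now = datetime(2024, 6, 1)
customer_ids = {1, 2}
orders = [
    {"id": 10, "customer_id": 1, "updated_at": datetime(2024, 5, 20)},
    {"id": 11, "customer_id": 3, "updated_at": datetime(2024, 5, 1)},   # customer 3 was deleted
    {"id": 12, "customer_id": 2, "updated_at": datetime(2024, 1, 1)},   # old, no activity
    {"id": 13, "customer_id": 2, "updated_at": datetime(2024, 1, 1)},   # old, but has activity
]

orphans = find_orphans(orders, customer_ids, fk="customer_id")
stale = find_stale(orders, now, ids_with_activity={13})
print({"orphans": orphans, "stale": stale})
```

Both functions only report. As in the workflow described above, nothing is removed until someone has reviewed the output.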

The Numbers

I asked Priya's team to track the time savings over a quarter. The numbers were pretty clear:

Before agents, the team averaged 12 hours a week on data operations -- writing scripts, running them, checking output, fixing the ones that broke. Over a 13-week quarter, that works out to roughly 156 hours.

After agents, the same operations took about 3 hours per week — mostly reviewing agent output and approving destructive operations. That's about 39 hours per quarter.

The difference is 117 hours per quarter. That's roughly three full work weeks of engineering time redirected from data janitor work to actual product development.
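The arithmetic behind those figures is short enough to check directly. The 13-week quarter and the 40-hour work week are assumptions used for the conversion; the weekly hours are the team's own numbers.

```python
WEEKS_PER_QUARTER = 13      # assumption: 52 weeks / 4
HOURS_PER_WORK_WEEK = 40    # assumption: standard full-time week

hours_before = 12 * WEEKS_PER_QUARTER   # 12 hours a week before agents
hours_after = 3 * WEEKS_PER_QUARTER     # 3 hours a week after agents
hours_saved = hours_before - hours_after
work_weeks_saved = hours_saved / HOURS_PER_WORK_WEEK

print(hours_before, hours_after, hours_saved, work_weeks_saved)
```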

The error rate also dropped. Scripts written in a hurry, tested against a handful of records, and run against production are inherently risky. Three data incidents in the six months before agents. Zero in the six months after, because the agents produce previews of their changes before executing, and Priya reviews them before approving.
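The preview-then-approve step is what separates this from a hurried script, and the pattern itself is simple. The sketch below shows the general idea under stated assumptions: the function names, the row shape, and the `status` values are hypothetical, and this is not a description of how the agents implement it internally.

```python
from typing import Callable


def preview_changes(rows: list[dict], patch_fn: Callable[[dict], dict]) -> list[dict]:
    """Compute what would change, as (old, new) pairs, without touching the rows."""
    changes = []
    for row in rows:
        proposed = patch_fn(row)
        diff = {k: (row.get(k), v) for k, v in proposed.items() if row.get(k) != v}
        if diff:
            changes.append({"id": row["id"], "diff": diff})
    return changes


def apply_changes(rows: list[dict], changes: list[dict], approved: bool) -> list[dict]:
    """Return updated copies of the rows, but only if a reviewer approved."""
    if not approved:
        return rows
    new_values = {
        change["id"]: {k: new for k, (_, new) in change["diff"].items()}
        for change in changes
    }
    return [{**row, **new_values.get(row["id"], {})} for row in rows]


# Hypothetical sample data.
rows = [{"id": 1, "status": "trial"}, {"id": 2, "status": "active"}]

changes = preview_changes(rows, lambda row: {"status": "active"})
print(changes)  # the reviewer sees exactly which rows change, and how

unapproved = apply_changes(rows, changes, approved=False)
approved = apply_changes(rows, changes, approved=True)
```

Because the preview lists old and new values side by side, a mistake like the wrong-column update described earlier would be visible before anything is written.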

What Agents Don't Replace

Agents don't replace database migrations. Schema changes — adding columns, modifying constraints, creating indexes — still go through Priya's migration pipeline with proper version control, review, and rollback plans.

Agents don't replace monitoring. Database performance, query plans, connection pool utilization — these still need proper observability tooling.

Agents don't replace thinking about data architecture. Choosing between a normalized schema and a denormalized one, deciding on indexing strategies, designing for query patterns — this is engineering work that requires context and judgment.

What agents replace is the manual labor that sits between the architecture and the day-to-day reality of keeping data clean, consistent, and correct. That labor was eating Priya's team alive. Now it doesn't.

Stop Writing Scripts That Die

If you have a folder of data operations scripts — and if you've been building on Supabase for more than six months, you almost certainly do — count how many of them still work. Check which ones reference columns or tables that no longer exist. Tally up the hours your team sinks into writing, running, and patching them.

Then ask whether that's really how you want your engineers spending their time.

Priya's folder still exists. She keeps it around as a monument. Sixty-seven scripts that each represent a few hours of work, a specific problem, and a solution that expired. She doesn't add to it anymore. She describes what she needs, reviews what the agent proposes, and gets back to work that actually moves the product forward.

Try These Agents

- Supabase Database Cleanup Agent — Scan for and resolve duplicate, orphaned, and stale records in your Supabase database

- Supabase Data Sync Agent — Sync data between Supabase tables and external sources without brittle scripts

- Supabase Inventory Tracker — Track inventory levels, flag discrepancies, and keep stock data accurate

- Supabase Content Publisher — Create and manage content records in Supabase