We Used Notion AI for Six Months. Then We Added External Agents and Everything Changed

When Notion launched its built-in AI features, Diana was the first person on our team to try them. She's our competitive intelligence lead, and she thought Notion AI would solve her biggest problem: keeping our competitive wiki current. She was half right.

Notion AI did a good job at the stuff it's designed for. Summarizing long pages. Drafting text from bullet points. Generating tables from unstructured notes. Diana used it to turn her raw research notes into formatted competitor profiles, and it cut her formatting time roughly in half. She went from spending 40 minutes per competitor profile to about 20.

But here's what Notion AI didn't do: it didn't go find the competitive information in the first place. Diana still had to manually search competitor websites, read their blog posts, check their pricing pages, monitor their social accounts, scan review sites for customer complaints, and copy all of that into Notion before the AI could do anything with it. The AI made her faster at organizing information she already had. It did nothing about the hours she spent gathering it, which made up most of her seven-hour research week.

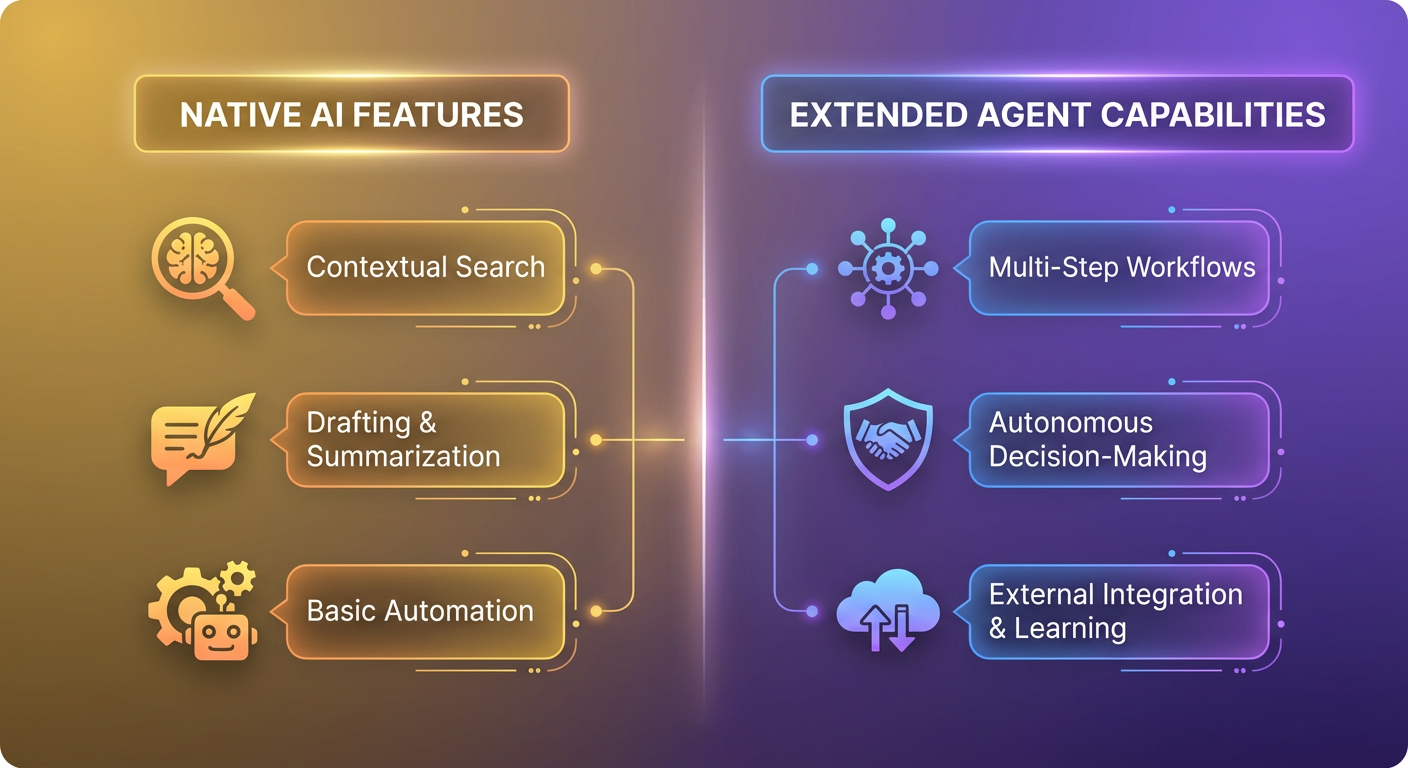

That gap between "good at processing text" and "good at running multi-step research workflows" is where external AI agents come in.

What Notion AI Actually Does Well

I want to be fair about this because Notion AI is genuinely useful for certain tasks. Our team uses it daily for three things.

Summarizing meeting notes. After a long meeting, Notion AI can condense four pages of notes into five bullet points. It's accurate about 85% of the time. The other 15%, it misses a decision or buries an action item. But it's a good first draft.

Drafting from outlines. Elena writes content briefs as bullet-point outlines in Notion. She uses the AI to expand those bullets into paragraphs. The output needs editing, always, but it gives her a starting point instead of a blank page.

Q&A on page content. You can ask Notion AI questions about the content on a page. "What were the three decisions made in this meeting?" or "What's the deadline mentioned in this doc?" It's like having Ctrl+F but smarter. Works well for long documents where you don't want to scroll through everything.

All three of these features work within a single Notion page. That's the boundary. Notion AI reads the content on the page you're looking at and does things with that content. It doesn't go outside Notion. It doesn't connect to your Salesforce instance. It doesn't check your competitors' websites. It doesn't pull data from APIs.

Where the Boundary Became a Problem

Diana's competitive intelligence workflow had five steps:

1. Research competitor activity (websites, social media, review sites, press releases)

2. Collect the raw information into Notion

3. Organize it into a structured profile

4. Analyze patterns and changes over time

5. Share findings with the sales and product teams

Notion AI helped with step 3. Steps 1 and 2 were entirely manual. Steps 4 and 5 were partially manual.

She was spending about 7 hours per week on competitive research. Notion AI reduced that by maybe 90 minutes. The remaining 5+ hours were all information gathering and cross-referencing that required going outside Notion.

Kenji had a similar problem on the product side. He wanted to build a feature comparison matrix that automatically updated when competitors shipped new features. Notion AI could help him format the matrix. It couldn't tell him when a competitor launched something new.
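To make Kenji's problem concrete: the missing piece is change detection, not formatting. Here's a minimal sketch of what that looks like, assuming you already have two snapshots of a competitor's feature list. The feature names and the `diff_features` helper are made up for illustration, and getting the snapshots in the first place is exactly the part that requires leaving Notion.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureDiff:
    added: list[str]
    removed: list[str]


def diff_features(previous: set[str], current: set[str]) -> FeatureDiff:
    """Compare two snapshots of a competitor's feature list."""
    return FeatureDiff(
        added=sorted(current - previous),
        removed=sorted(previous - current),
    )


# Hypothetical snapshots; in practice these would come from whatever
# reads the competitor's feature or changelog page each week.
last_week = {"SSO", "CSV export", "Slack integration"}
this_week = {"SSO", "CSV export", "Slack integration", "AI summaries"}

diff = diff_features(last_week, this_week)
print(diff.added)    # ['AI summaries']
print(diff.removed)  # []
```

Anything in `added` is a candidate new row for the comparison matrix.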

What External AI Agents Add

An AI agent isn't limited to the content on a single Notion page. It can search the web, pull data from APIs, read competitor websites, check review sites, and then write the results into Notion. It works across tools instead of within one tool.

We set up a competitive intelligence wiki builder that runs weekly. The agent researches each competitor by searching the web for recent news, checking their website for changes, and pulling review data. Then it creates or updates pages in our Notion competitive intel database with structured profiles: what changed this week, new features spotted, pricing updates, notable customer reviews, and hiring signals.

Diana's reaction after the first week: "This is what I thought Notion AI was going to be."

The agent doesn't replace Notion AI. It feeds it. The agent gathers and structures the raw information. Notion AI helps Diana query and summarize it afterward. They work at different layers of the same workflow.
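The shape of that weekly run can be sketched in a few lines. This is not the agent's actual code, just an illustration of the gather-then-structure layer. `WeeklyProfile`, `run_weekly`, and the stub sources are all hypothetical names, and the stubs stand in for real search, scraping, and review lookups so the example runs offline.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class WeeklyProfile:
    competitor: str
    news: list[str] = field(default_factory=list)
    site_changes: list[str] = field(default_factory=list)
    reviews: list[str] = field(default_factory=list)


# A "source" is any function that takes a competitor name and returns findings.
Source = Callable[[str], list[str]]


def run_weekly(competitors: list[str], search_news: Source,
               check_site: Source, pull_reviews: Source) -> list[WeeklyProfile]:
    """Gather findings for each competitor and return structured profiles."""
    profiles = []
    for name in competitors:
        profiles.append(WeeklyProfile(
            competitor=name,
            news=search_news(name),
            site_changes=check_site(name),
            reviews=pull_reviews(name),
        ))
    return profiles


# Stub sources (assumptions for the demo, not real integrations).
def fake_news(name: str) -> list[str]:
    return [f"{name} announced a new pricing tier"]


def no_findings(name: str) -> list[str]:
    return []


profiles = run_weekly(["Acme", "Globex"], fake_news, no_findings, no_findings)
for p in profiles:
    print(p.competitor, p.news)
```

Keeping each source as a plain function means you can add or swap one (job boards, pricing pages) without touching the loop that builds the profiles.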

The Competitive Intel Wiki in Practice

The wiki tracks eight competitors. Before the agent, Diana updated each competitor profile manually about once every two weeks. Some competitors went a month between updates. By the time she got to competitor number eight, the information on competitor number one was already stale.

Now the agent updates all eight profiles weekly. Each profile page in Notion gets new blocks appended with that week's findings: any press mentions, product changes visible on their website, new job postings that signal strategic direction, and customer review trends.
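For a sense of what "new blocks appended" means, here's a rough sketch that builds the request body for one week's findings. It assumes the block shapes from Notion's public API (the "append block children" endpoint takes a `children` array of block objects). The section names, the date, and the `weekly_blocks` helper are illustrative, and the sketch only builds the payload; it doesn't send anything.

```python
import json
from datetime import date


def text_block(block_type: str, content: str) -> dict:
    """Build one Notion-style block holding a single plain-text run."""
    return {
        "object": "block",
        "type": block_type,
        block_type: {"rich_text": [{"type": "text", "text": {"content": content}}]},
    }


def weekly_blocks(week_of: date, findings: dict[str, list[str]]) -> dict:
    """Build the request body for appending one week's findings to a page."""
    children = [text_block("heading_2", f"Week of {week_of.isoformat()}")]
    for section, items in findings.items():
        if not items:
            continue  # skip empty sections rather than append bare headings
        children.append(text_block("heading_3", section))
        children.extend(text_block("bulleted_list_item", item) for item in items)
    return {"children": children}


body = weekly_blocks(
    date(2024, 1, 8),  # illustrative date
    {
        "Press mentions": ["Example: funding round covered in trade press"],
        "Product changes": [],
        "Job postings": ["Example: Senior ML Engineer"],
    },
)
print(json.dumps(body, indent=2))
```

Appending a dated heading each week, instead of overwriting the page, is what keeps the history visible so changes over time can be compared.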

Tomás, who runs our sales team, uses the wiki before every competitive deal. He told me he used to ask Diana to "get me the latest on [competitor]" about three times a week. Each request took Diana 30-45 minutes to research and compile, which adds up to roughly 1.5 to 2.25 hours a week on ad-hoc requests alone. Now Tomás opens the Notion wiki and the information is already there, updated within the last seven days.

The wiki also surfaces patterns that manual research missed. The agent noticed that one competitor had posted seven engineering job listings mentioning "AI" in a two-week span. That signal would have taken Diana weeks to spot manually, if she spotted it at all. By the time she got to checking that competitor's job board, the listings might have been filled and removed.
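A signal like that is easy to check mechanically once the job postings are collected. Here's a minimal sketch, assuming each posting is a (date, title) pair. The sample data, the alert threshold of 5, and the function name are made up for illustration, and this is not how the agent itself is implemented.

```python
import re
from datetime import date, timedelta


def max_keyword_postings(postings: list[tuple[date, str]], keyword: str,
                         window_days: int = 14) -> int:
    """Return the largest number of keyword-matching postings in any window."""
    # Whole-word match so "AI" does not hit words like "Retail".
    pattern = re.compile(rf"\b{re.escape(keyword)}\b", re.IGNORECASE)
    dates = sorted(d for d, title in postings if pattern.search(title))
    best = 0
    start = 0
    for end, current in enumerate(dates):
        # Shrink the window from the left until it spans under window_days.
        while current - dates[start] >= timedelta(days=window_days):
            start += 1
        best = max(best, end - start + 1)
    return best


# Hypothetical postings: seven AI roles over 12 days, plus unrelated noise.
first = date(2024, 3, 1)
postings = [(first + timedelta(days=2 * i), f"AI Engineer {i}") for i in range(7)]
postings += [
    (date(2024, 3, 5), "Retail Associate"),   # contains "ai" but not as a word
    (date(2024, 1, 10), "AI Researcher"),     # outside the two-week window
]

peak = max_keyword_postings(postings, "AI")
print(peak)  # 7
if peak >= 5:  # threshold is an arbitrary choice for this example
    print("Hiring signal: unusual cluster of AI roles")
```

The sliding window matters because listings get filled and removed; counting per window catches a burst even if the total over a quarter looks ordinary.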

Cross-Tool Workflows That Notion AI Can't Touch

Competitive intelligence was the first use case, but the pattern applies broadly. Any workflow that requires data from multiple sources before writing to Notion is a candidate for an external agent.

Our marketing team needed a workflow that monitors competitor content, analyzes it, and populates a Notion database with content gap analysis. Notion AI can't browse competitor blogs. An agent can.

Our sales team needed meeting prep docs in Notion that included LinkedIn research, company news, and CRM data. Notion AI can't access LinkedIn or Salesforce. An agent with the right pre-meeting research setup can pull that data and write it directly into a Notion page.

Our ops team needed project status pages that aggregated information from Slack conversations, Google Sheets timelines, and Jira tickets. Notion AI can't read your Slack history. An agent can search Slack, read the relevant threads, and sync the summary into Notion.
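The ops case boils down to merging updates from several tools into one view per project. A rough sketch of that merge step, assuming the updates have already been fetched: the `Update` record, the project names, and the sample entries are hypothetical, and fetching from Slack, Google Sheets, or Jira is left out.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Update:
    project: str
    source: str  # e.g. "slack", "sheets", "jira"
    when: datetime
    text: str


def build_status(updates: list[Update]) -> dict[str, list[str]]:
    """Group updates by project and order each group newest first."""
    by_project: dict[str, list[Update]] = defaultdict(list)
    for u in updates:
        by_project[u.project].append(u)
    return {
        project: [f"[{u.source}] {u.text}"
                  for u in sorted(items, key=lambda u: u.when, reverse=True)]
        for project, items in sorted(by_project.items())
    }


# Made-up sample data standing in for fetched updates.
updates = [
    Update("Onboarding revamp", "jira", datetime(2024, 5, 6, 9, 0), "Ticket moved to In Review"),
    Update("Onboarding revamp", "slack", datetime(2024, 5, 7, 14, 30), "Design sign-off confirmed"),
    Update("Billing migration", "sheets", datetime(2024, 5, 5, 11, 0), "Cutover date changed"),
]

status = build_status(updates)
for project, lines in status.items():
    print(project)
    for line in lines:
        print("  ", line)
```

Tagging each line with its source makes it easy to trace a status item back to the thread, sheet, or ticket it came from.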

The pattern is the same every time. Notion AI is a single-page tool. Agents are multi-tool orchestrators. If your workflow lives entirely within one Notion page, the built-in AI is probably enough. If your workflow requires pulling information from anywhere else, you need something that can reach outside Notion's walls.

How We Think About It Now

We stopped framing this as "Notion AI versus external agents." It's "Notion AI for text processing, agents for data workflows." Different jobs.

Diana still uses Notion AI every day. She uses it to summarize the weekly competitor updates the agent writes. She uses it to generate comparison tables from the data the agent collects. She uses it to draft the competitive brief she sends to the exec team every month. All valid uses.

What she doesn't do anymore is spend most of a seven-hour research week copying information from browser tabs into Notion. The agent does the gathering. Notion AI does the polishing. Diana does the thinking: what does this competitive data actually mean, and what should we do about it?

Her competitive research time dropped from 7 hours per week to about 2. The quality went up because the agent checks more sources more frequently than Diana could alone. And the wiki is always current, which means the sales team stopped asking her for ad-hoc research.

The 2 hours she still spends? That's analysis, strategy, and judgment. The parts of competitive intelligence that actually require a human.

Try These Agents

- Competitive Intelligence Wiki Builder -- Build and maintain a competitive intel wiki in Notion with automated weekly research

- Pre-Meeting Research to Notion -- Create structured meeting prep docs in Notion using LinkedIn and CRM data

- Notion Meeting Notes to Slack -- Sync Notion meeting notes to Slack channels after every call

- Notion Salesforce Deal Tracker -- Keep competitive deal data in Notion current with Salesforce syncing